Announcing H.264 Support in Foxglove

Leverage powerful video codecs to substantially reduce your storage requirements

High-resolution image sensors are becoming more accessible and common in robotics systems. These sensors bring new perception capabilities, but also new challenges. Larger images require more memory, compute, and GPU resources to process; they also take up more storage space and I/O bandwidth. Image data is often critical for debugging, so robotics teams have historically had to accept these storage costs.

Foxglove’s new H.264 support helps robotics teams benefit from advances in higher-resolution sensors while reducing storage impact. Record H.264-encoded video streams in MCAP or bag files, and visualize them with overlaid annotations and 3D markers alongside your other log data.

Getting started with H.264 data

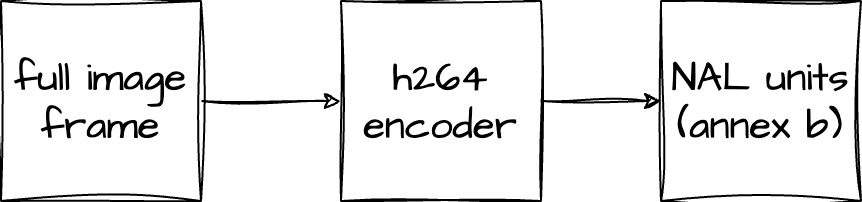

The typical H.264 video pipeline consists of an input source that produces full raw image frames. These frames are then fed into an encoder (hardware or software), which outputs one or more NAL units.

These NAL units must be written to your log in a format that Foxglove will understand. For this, we’ve created the foxglove.CompressedVideo message schema – set the format field to “h264”, and the data field to contain the NAL units in Annex B format.
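As a rough illustration of the message described above, the following Python sketch wraps one encoded frame in a JSON-style CompressedVideo message. Only the format and data fields come from this post; the timestamp and frame_id fields, the base64 encoding of data, and the helper name `make_compressed_video` are assumptions that should be checked against the published schema.

```python
import base64

# Annex B NAL units are prefixed by a 3- or 4-byte start code.
START_CODES = (b"\x00\x00\x00\x01", b"\x00\x00\x01")


def make_compressed_video(annex_b: bytes, stamp_ns: int, frame_id: str) -> dict:
    """Wrap all NAL units of one encoded frame in a CompressedVideo-shaped dict.

    Hypothetical helper: field names other than "format" and "data" are assumed.
    """
    if not annex_b.startswith(START_CODES):
        raise ValueError("expected Annex B data beginning with a start code")
    return {
        "timestamp": {
            "sec": stamp_ns // 1_000_000_000,
            "nsec": stamp_ns % 1_000_000_000,
        },
        "frame_id": frame_id,
        # Assumption: bytes are base64-encoded when the message is JSON.
        "data": base64.b64encode(annex_b).decode("ascii"),
        "format": "h264",
    }
```

The start-code check is only a cheap sanity test; it catches length-prefixed (AVCC) input in most cases but does not validate the bitstream.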

Once these CompressedVideo messages have been recorded to an MCAP file, drag and drop the file into Foxglove, add an Image panel to your layout, and open the panel settings to select your video topic (e.g. /camera).

Depending on where you are in playback, you may need to play a few frames before an image appears, because decoding can only start from a keyframe.
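To see where keyframes fall in a recording, one option is to inspect the NAL unit types in each message's data. The sketch below is a simplification under stated assumptions: it treats an IDR slice (nal_unit_type 5) as the keyframe marker, which holds for many encoder configurations but not all, and the function names are illustrative rather than part of any Foxglove API.

```python
import re

# Every Annex B start code ends in 00 00 01 (the 4-byte form adds a leading zero).
_START_CODE = re.compile(b"\x00\x00\x01")

IDR_SLICE = 5  # nal_unit_type of a coded slice of an IDR picture


def nal_unit_types(annex_b: bytes) -> list[int]:
    """Return the nal_unit_type of each NAL unit in an Annex B buffer."""
    types = []
    for match in _START_CODE.finditer(annex_b):
        header_index = match.end()
        if header_index < len(annex_b):
            # The type is the low 5 bits of the first byte after the start code.
            types.append(annex_b[header_index] & 0x1F)
    return types


def contains_keyframe(annex_b: bytes) -> bool:
    """True if the buffer holds at least one IDR slice."""
    return IDR_SLICE in nal_unit_types(annex_b)
```

Running `contains_keyframe` over the data field of successive messages shows how far playback may need to advance from a given seek position before a full frame can be decoded.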

CompressedVideo messages are viewable in the Image and 3D panels, so you can leverage these panels’ existing features – like the ability to overlay 2D annotations, render projected 3D scene entities, or even right-click the image to download the full frame.

H.264 video support works with a variety of data sources – including local files, Foxglove WebSocket connections, and Foxglove data streaming – that all benefit from the reduction in bandwidth.

Experiment with ROS image transport

For ROS 2, there is a community-contributed open source image transport package that uses ffmpeg to encode H.264 video.

To experiment with video in your existing image pipeline, you can adapt this package to publish foxglove_msgs/msg/CompressedVideo messages.

Tips for recording H.264 video for Foxglove

H.264 video encoders offer an enormous number of configuration options to tune their output. Experiment with various bitrate, keyframe, and profile settings to achieve a balance between quality and storage space that is appropriate for your application.
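One way to reason about the keyframe part of that balance is a back-of-the-envelope estimate. The Python sketch below assumes constant frame sizes, which real encoders do not produce, and the byte counts in the usage note are invented placeholders, not measurements.

```python
def stream_estimate(fps: float, keyframe_interval_s: float,
                    keyframe_bytes: int, delta_bytes: int) -> dict:
    """Estimate storage rate and worst-case decode work for one keyframe interval.

    Hypothetical model: one keyframe followed by equally sized delta frames.
    """
    frames_per_interval = round(fps * keyframe_interval_s)
    interval_bytes = keyframe_bytes + (frames_per_interval - 1) * delta_bytes
    return {
        "bytes_per_second": interval_bytes / keyframe_interval_s,
        # Worst case: the keyframe plus every delta frame that follows it.
        "max_frames_to_decode": frames_per_interval,
    }
```

With placeholder sizes of 100,000 bytes per keyframe and 10,000 bytes per delta frame at 30 fps, a 1-second interval comes to 390,000 bytes per second with at most 30 frames to decode, while a 5-second interval comes to 318,000 bytes per second with at most 150 frames to decode.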

Here are some tips for getting a good experience with Foxglove:

- Use Annex B NAL units! AVCC is not supported. Most software and hardware encoders can be configured to output Annex B – for some, it is the default.

- Most encoders have an API that takes a full frame as input and outputs one or more NAL units. For every full frame input API call you make, create a CompressedVideo output message containing all the output NAL units. This captures every input frame into a CompressedVideo message with the appropriate NAL units, so that feeding it back through a decoder will recreate the input frame.

- Be mindful of how often you produce keyframes (full image frames). While keyframes do use image compression techniques (similar to a JPEG or PNG), they take up more space than delta frames. On the other hand, delta frames have to be applied in order (starting from the most recent keyframe) to produce a full frame. If you have a keyframe every second, you’ll only ever have to look back 1 second at most to apply the relevant delta frames. If you have keyframes every 5 seconds, you’ll have to look back further to recreate full frames, but the overall video stream will take up less storage space.

- Avoid B frames – they will add latency when decoding in Foxglove. If supported by your encoder, use the “BASELINE” H.264 profile, which does not allow B frames. Otherwise, go into your encoder settings to disable frame reordering and/or B frames.
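For the first tip above, if an encoder can only produce AVCC output, the NAL units can be repackaged as Annex B before recording. The following Python sketch assumes each NAL unit is preceded by a 4-byte big-endian length, which is common but is actually set by the stream's configuration record; the function name is illustrative.

```python
START_CODE = b"\x00\x00\x00\x01"


def avcc_to_annex_b(avcc: bytes, length_size: int = 4) -> bytes:
    """Replace AVCC length prefixes with Annex B start codes.

    Assumption: length_size matches the prefix size used by the encoder.
    """
    out = bytearray()
    offset = 0
    while offset < len(avcc):
        if offset + length_size > len(avcc):
            raise ValueError("truncated NAL length prefix")
        nal_length = int.from_bytes(avcc[offset:offset + length_size], "big")
        offset += length_size
        nal = avcc[offset:offset + nal_length]
        if len(nal) != nal_length:
            raise ValueError("truncated NAL unit")
        out += START_CODE + nal
        offset += nal_length
    return bytes(out)
```

AVCC streams usually carry the SPS and PPS parameter sets outside the frame data, so a complete conversion would also need to prepend them (with start codes) to each keyframe. This sketch does not do that.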

Stay tuned

We’re excited to continue extending Foxglove’s video support – let us know if you have questions and feedback.

Check out the 3D panel and Image panel docs for more information about their video support.

You can also join our Discord community or follow us on Twitter and LinkedIn to stay up-to-date on all Foxglove news and releases.