Robots are compound systems navigating complex worlds. To understand how they interact with other players in their environments, we can use mathematical operations called transformations. Transformations (or more simply, “transforms”) express an object’s position and orientation in relation to another.

In this tutorial, we’ll cover how transforms describe the worlds that our robots navigate – not only by positioning their components in relation to each other, but also in relation to the objects they encounter.

Situating objects with frames

In robotics, a frame refers to the coordinate system describing an object’s position and orientation, typically along x, y, and z axes. ROS requires each frame to have its own unique frame_id, and a typical scene consists of multiple frames for each robot component (i.e. limb, sensor), detected object, and player in the robot’s world.

A scene always has one base frame – usually named world or map – that is an unmoving constant. All other frames in the scene – including the robot’s own frame, which ROS typically calls base_link – are children to this base frame, and are positioned relative to this parent in some way.

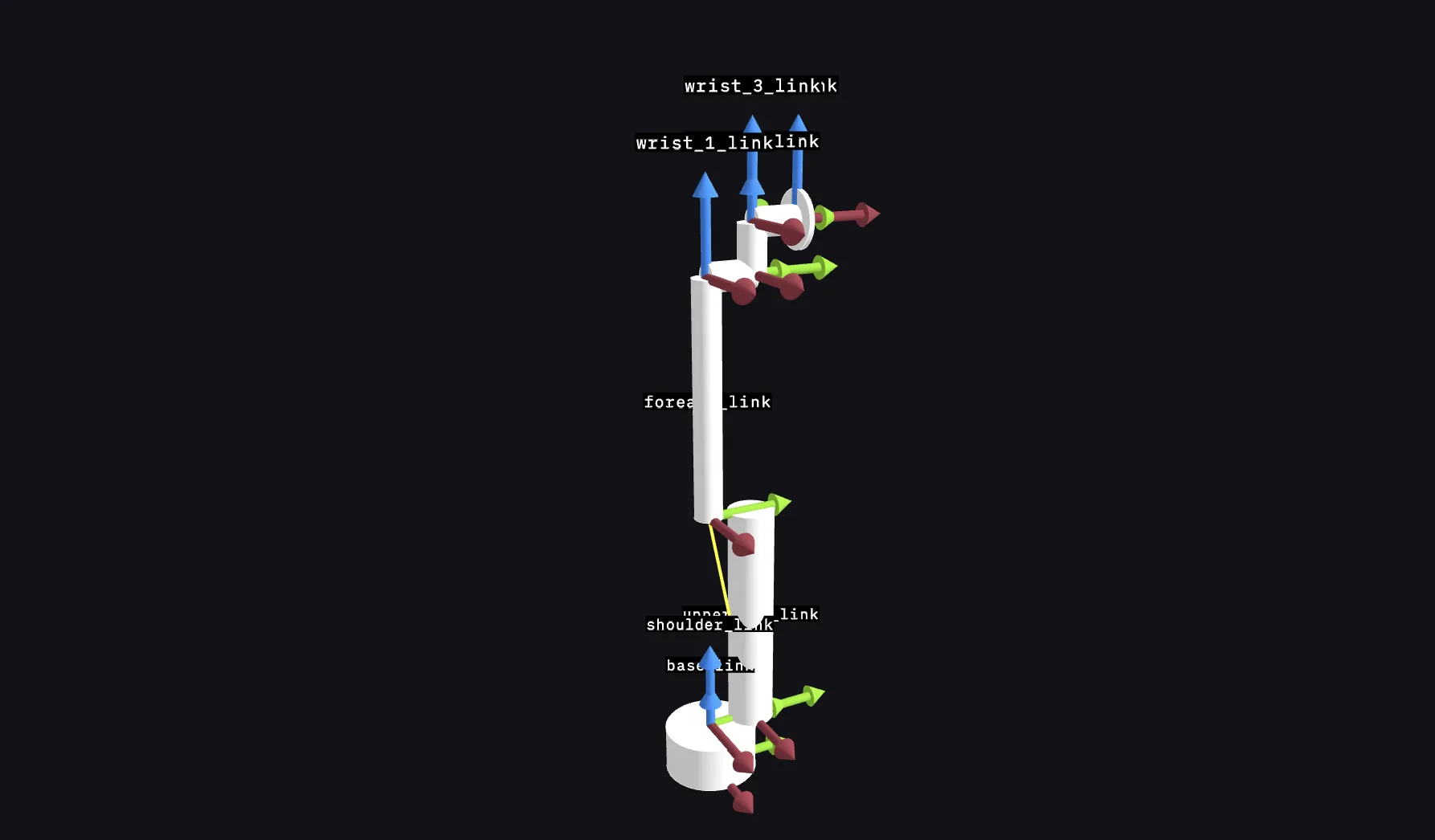

The robot’s base_link frame usually starts at its base and branches off into child frames for limbs and sensors:

Visualizing the UR5 collaborative robot arm in Foxglove’s 3D panel. Each frame’s x, y, and z axes are denoted by red, blue, and green arrows.

Each sensor’s frames can also branch off into further child frames for the objects they detect. In this way, all objects in a given scene are somehow defined in relation to the base frame and its child frames.

Connecting frames with transforms

Transforms define the translations and rotations needed to get from a source frame to a target frame – whether it’s parent-to-child, child-to-parent, or across multiple “generations” of frames. A complete set of a scene’s transforms, from the base frame to all its related children, constitutes a transform tree.

With a transform tree, you can quickly find the position and orientation of any given frame in the scene, no matter how many levels removed you are from the base. Transforms can be either static or mobile – static transforms (e.g. between base_link and a sensor_link frame) remain constant, while mobile transforms (e.g. between base_link and either a robotic arm mounted to the base or a separate detected object) can change as the world does.

In ROS, transform messages are broadcast on two topics – /tf_static (for static transforms) and /tf (for mobile transforms). This separation improves bandwidth and reduces the number of transforms being published. ROS also provides the tf2 library to help us calculate transformations between frames.

In the real world

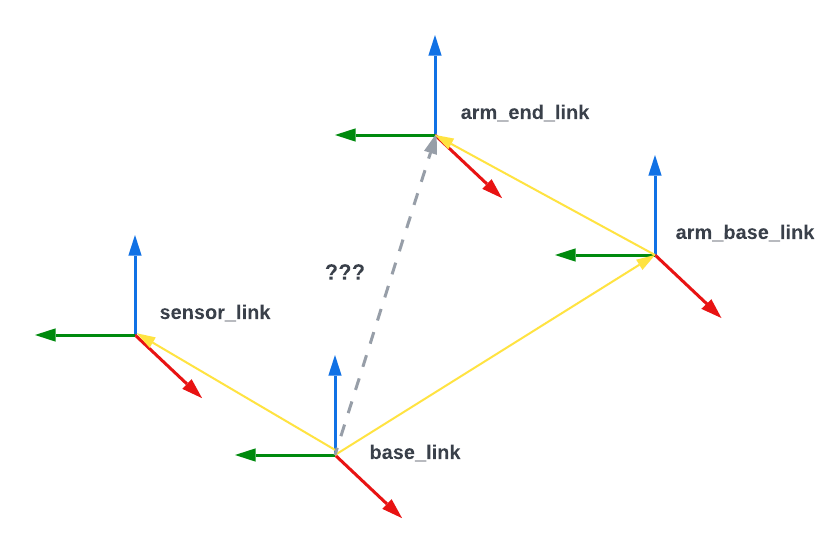

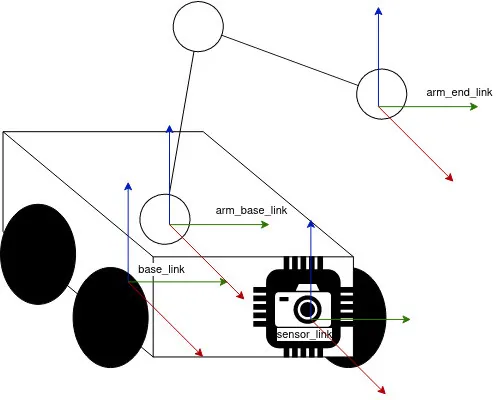

Let’s imagine a rover with a camera and robotic arm, navigating a flat maze:

We can see that the rover’s base_link frame has static transforms to two child frames – a sensor_link frame representing the unmoving camera mounted to the front, and an arm_base_link frame representing the unmoving arm base mounted to the top.

We also see that the arm_base_link frame has a mobile transform to its own child frame – an arm_end_link frame representing the moving arm.

Building the transform tree

While ROS’s tf2 library provides an easy way for us to calculate transforms, let’s take a peek at what this library is actually doing under the hood.

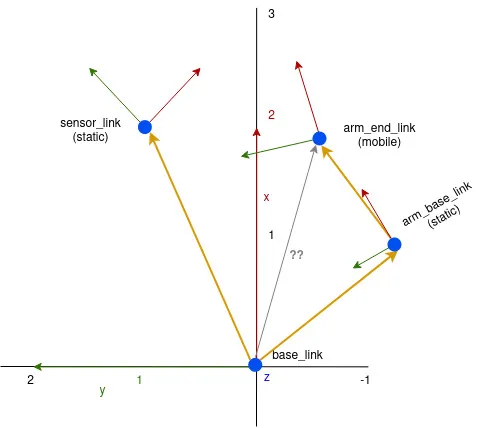

Each child frame has a transform that represents its position vector and rotation in relation to its parent frame:

- Parent:

base_linksensor_link–(2,1)position,-45°(π/4) rotationarm_base_link–(1,-1)position,30º(π/6) rotation

- Parent:

arm_base_linkarm_end_link–(1,1)position, no rotation

Given all this information, we can now use transforms to deduce arm_end_link’s position in the base_link frame!

Calculating an object’s position using transforms

To start traversing our transform tree (from base_link to arm_base_link to arm_end_link), we need two pieces of information:

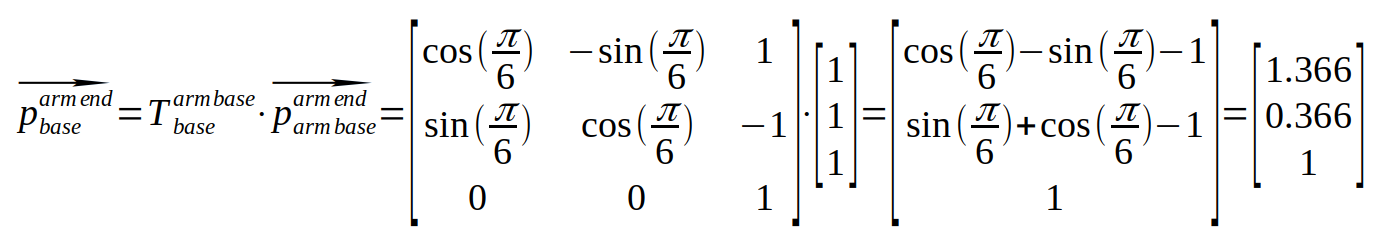

T- 2D transform matrix betweenbase_linkandarm_base_link(translation ofdxoverxanddyovery, rotation overz)p– Position vector forarm_end_linkin thearm_base_linkframe

Let’s set the following values in T matrix:

Φ = π/6– Rotation ofarm_base_linkinbase_linkdx = 1– Position (x) ofarm_base_linkinbase_linkdy = -1– Position (y) ofarm_base_linkinbase_link

And the following values in the p matrix:

x = 1– Position (x) ofarm_end_linkinarm_base_linky = 1– Position (y) ofarm_end_linkinarm_base_link

Finally, let’s multiple these matrices together to get arm_end_link’s position in the base_link frame:

With our operation, we find that arm_end_link is in position (1.366, 0,366) of the base_link frame!

Since arm_end_link is a mobile frame, it’s important to note that this result is only valid at the robotic arm’s current position and angle. As the transform between arm_base_link and arm_end_link inevitably changes, so will arm_end_link’s position in space.

Learn more

Actual robots in the real world are often much more complex than our single-sensor example, and must navigate much trickier terrain than a flat maze. Calculating an object’s position in its environment will often require multiplying more than two matrices to travel from a source frame to a target frame.

Fortunately for us, ROS provides the tf2 library to do the necessary transformation matrix math for us, so we can avoid doing these manual calculations ourselves!

Stay tuned for our next tutorial on how to use these libraries to publish and view transforms in Foxglove. As always, feel free to ask questions, share feedback, and request a topic for the next blog post in our Discord community!